During Easter I made a push to finish up a project I’ve had running for a long while now (spare time is not something I have an abundance of 🤣). A total remake of AureliaWeekly.com! It’s what I’ve been spending most of my spare time on since early fall 😵 It’s been worth it though! Not only is it faster, more cost effective and environmentally friendly, it’s also using the brand new Aurelia 2!

Short and sweet!

My (current) philosophy regarding side projects is: Keep them short and sweet! We all know feature creep and scope are big problems for software projects. Even more so for side projects, I think. Sure, it’s fun to ideate all the bells and whistles. Then we start making small POCs, testing if they are feasible. And all of a sudden, six months have passed and all that’s done is 40% of the main feature and a whole slew of unfinished POCs of small features 🙃

Well, this project took ages. A lot of flip-flopping was done over technology choices, and a whole lot of Yak-shaving later, it’s finally finished about a year after the first data migration I made. 😂

The Goal?

So, why did I want to rewrite “The Weekly”?

I wanted to make the solution slightly faster and, most of all, I wanted to change the architecture. The first version was hosted as an Azure Web App, with a .NET Core project delivering the static web pages.

Also, the data layer needed an adjustment. Cosmos DB was the database I chose for v1, and although I love Cosmos, it’s just too expensive for such a small project as “The Weekly”.

The first port I made was just using the same front end code, but moved to being delivered as static web pages from an Azure Storage Account. Then halfway through the project, Aurelia 2 (still in pre-beta) was so stable I decided to rewrite the entire front-end using au2 just for kicks. Just between you and me, it made the project take a bit longer but made it so much more fun! 😁

TL;DR

✅ Everything I set out to do is achieved! Speed and performance improved by leaps and bounds, and the cost got totally slashed and is now less than a 100th of the operational cost of running v1!

🕸Front end written in au2

This part was an absolute pleasure! It’s still early days, and during the rewrite au2 was still in the pre-beta stage. Oddly enough, this hardly cost me any extra time. I think I found one or two things that did not work as expected, and the positive thing is that after I reported my findings they were patched and the fix was released within hours! Huge thanks to all the people in the Aurelia Core Team who helped out with fixes, answering questions, discussing architecture and giving me feedback on the site during development 🙇‍♂️

I find au2 a real pleasure to work with. I think my favorites so far are the new start-up system and how powerful a tool DI has become. The new router is also spectacularly fun to work with and I hope you try it out soon and see for yourselves!

⚡Moving API to Azure Functions

The API for v1 was a .NET Core Web API that was in the same project as the front end. It was convenient to host both front end and API from the same web server because of CORS etc. But as I wanted to cut cost, I decided to move the API to an Azure Function App. Since the project took me so long, a new version of Azure Functions was released during my rewrite. And as I love new shiny things, I of course decided to upgrade straight away 😆 This was pretty straightforward, as it was just upgrading the version of .NET Core used and some additional tweaks to use a Startup class.

The Yak-shaving really began when I had finished most of the API and decided to get cracking on the endpoints that need authentication. What’s the best way to handle that in Azure Functions?

Using what at the time was known as Easy Auth seemed to be a nice and smooth way to handle authentication. It turned out that it was inflexible, requiring auth for all endpoints. This was not what I wanted, as almost all the functionality is public and doesn’t need authorization at all.

After some searching, I found AzureExtensions.FunctionToken which turned out to be a real gem 💎 This extension enables a per-endpoint authorization behavior, as well as handling claims etc. Big thanks to Vitaly Bibikov for this project 🤗
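To make the difference between "auth for everything" and per-endpoint auth concrete, here is a minimal, framework-agnostic sketch in TypeScript. It is not the API of AzureExtensions.FunctionToken (which is a .NET library); the names `Req`, `Res`, `requireAuth` and `validateToken` are hypothetical, and the token check is a stub standing in for real JWT validation.

```typescript
// Hypothetical request/response shapes; a real Functions host provides its own.
interface Req {
  headers: Record<string, string | undefined>;
}
interface Res {
  status: number;
  body: string;
}
type Handler = (req: Req) => Res;

// Stub validator (assumption): a real one would verify a signed token and its claims.
function validateToken(token: string): boolean {
  return token === "valid-demo-token";
}

// Wraps a single handler so that only this endpoint demands a bearer token.
function requireAuth(handler: Handler): Handler {
  return (req) => {
    const header = req.headers["authorization"] ?? "";
    const token = header.startsWith("Bearer ") ? header.slice(7) : "";
    if (!validateToken(token)) {
      return { status: 401, body: "Unauthorized" };
    }
    return handler(req);
  };
}

// Public endpoint: no wrapper, anyone can call it.
const listSubmissions: Handler = () => ({ status: 200, body: "[]" });

// Protected endpoint: opts in to auth by being wrapped.
const approveSubmission: Handler = requireAuth(() => ({ status: 200, body: "approved" }));
```

The point of the design is that auth is opt-in per handler, so the mostly public API stays open while the few admin endpoints are locked down.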

💾Rewriting the data layer

I really like Cosmos DB! So that was my pick for the data layer in the first version. However, I don’t like the pricing model… And this project started well before Cosmos DB came out with its free tier. 😞

Taking cost into consideration, there is no better alternative than Azure Storage Tables. So I started migrating my data over to Azure Storage, and with just some small changes to the data structure from the Cosmos version, it was easily accomplished.
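As a rough illustration of what "small changes to the data structure" can look like, here is a sketch in TypeScript. Table Storage entities are flat and identified by a partition key plus a row key, so nested values from a document have to be flattened or serialized. The field names and the choice of partitioning by year are assumptions for the example, not the actual schema of “The Weekly”.

```typescript
// Hypothetical document shape, roughly how a submission might look in a document database.
interface SubmissionDoc {
  id: string;
  title: string;
  url: string;
  submittedAt: string; // ISO 8601 date
  tags: string[];
}

// Table Storage entities are flat: two string keys plus primitive properties.
interface SubmissionEntity {
  partitionKey: string;
  rowKey: string;
  title: string;
  url: string;
  submittedAt: string;
  tags: string; // arrays are not supported as property values, so they are serialized
}

function toEntity(doc: SubmissionDoc): SubmissionEntity {
  return {
    // Assumption: partition by year so one year's submissions are read together.
    partitionKey: doc.submittedAt.slice(0, 4),
    rowKey: doc.id,
    title: doc.title,
    url: doc.url,
    submittedAt: doc.submittedAt,
    tags: JSON.stringify(doc.tags),
  };
}

function fromEntity(entity: SubmissionEntity): SubmissionDoc {
  return {
    id: entity.rowKey,
    title: entity.title,
    url: entity.url,
    submittedAt: entity.submittedAt,
    tags: JSON.parse(entity.tags) as string[],
  };
}
```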

My main concern was performance. As much as I wanted a really cost efficient system, it also had to be snappy and responsive. On paper, Cosmos promises awesome performance, while Azure Storage has selling points other than performance.

🚄Adding an Index with Azure Cognitive Search

To enhance response time when asking for all submissions I created a search index with Azure Cognitive Search. My Function API then asked the index for Submissions instead of talking directly to Azure Table Storage.

Azure Search used to be one of these things that cost an arm and a leg before, but they have a free tier now so that was totally doable with my cost constraints in mind!

🧪Time to perf test!

Fun thing happened! It turned out that the difference in response time for using Search, compared to querying the Azure Storage Table directly, was totally negligible! So I removed the search index solution, as it just added technical complexity.

However, keep in mind that this is a result of my data model and my usage patterns! If I had a more complex model or more advanced queries, then Azure Cognitive Search would be the better solution.
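A comparison like this can be as simple as timing the same request a number of times against each backend and comparing medians. The sketch below shows one way to do that in TypeScript; it is not the test I actually ran, and the function names and the threshold are assumptions for illustration.

```typescript
// Returns the median of a non-empty list of numbers.
function median(samples: number[]): number {
  const sorted = [...samples].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Times `runs` sequential calls of an async operation, in milliseconds.
async function timeCalls(op: () => Promise<unknown>, runs: number): Promise<number[]> {
  const samples: number[] = [];
  for (let i = 0; i < runs; i++) {
    const start = Date.now();
    await op();
    samples.push(Date.now() - start);
  }
  return samples;
}

// True when the two medians differ by less than `thresholdMs` (the threshold is an assumption).
function isNegligible(a: number[], b: number[], thresholdMs: number): boolean {
  return Math.abs(median(a) - median(b)) < thresholdMs;
}
```

In practice you would pass two operations to `timeCalls`, one that calls the endpoint backed by the search index and one that calls the endpoint querying the table directly, and then feed both sample lists to `isNegligible`. The median is used rather than the mean so a single slow cold start does not skew the result.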

🌍Hosting

As v1 was served through a .NET Core app, there was no other real solution than hosting it on an Azure Web App. For a static SPA like “The Weekly”, this is just a bad move. To be able to use a certificate, the minimum required tier at the time of deploying v1 was the S1 App Service Plan. While this is not unreasonably expensive, it’s still a cost, especially considering the site is a hobby project.

The more performant and cost effective solution was to use static web page hosting in Azure Storage. I’ve played around with it before and quite liked it. But what about equal speed for all users?

There is the option of setting up a secondary endpoint in a different region. But then there’s a need for a load balancer in front of the Storage Accounts to route traffic to the correct endpoint. This could be solved using Azure API Management. This would also solve the HTTPS issue, since a custom domain can be mapped to a static website but it can’t use HTTPS (and this is after all 2020…)!

You can make your static website available via a custom domain.

– From the Azure Static websites documentation

It’s easier to enable HTTP access for your custom domain, because Azure Storage natively supports it. To enable HTTPS, you’ll have to use Azure CDN because Azure Storage does not yet natively support HTTPS with custom domains. See Map a custom domain to an Azure Blob Storage endpoint for step-by-step guidance.

If the storage account is configured to require secure transfer over HTTPS, then users must use the HTTPS endpoint.

🚀Next move – Azure CDN

As the section in the documentation states 👆 I decided to try CDN hosting. Turns out hosting on CDN is no problem on a budget either 😁 There are a few CDN solutions accessible from Azure as well, so you’ve got options. I chose between Standard Microsoft and Standard Verizon. As it doesn’t cost anything to create the services, I created both versions. I did a couple of tests looking at the download times in the network tab in my browser. My perception was that the Verizon CDN was more performant and I was about to remove the Microsoft version, when I tested the site with fastorslow.com. Turns out I was wrong. The Microsoft Standard CDN had a more even performance on a global scale! 🌎

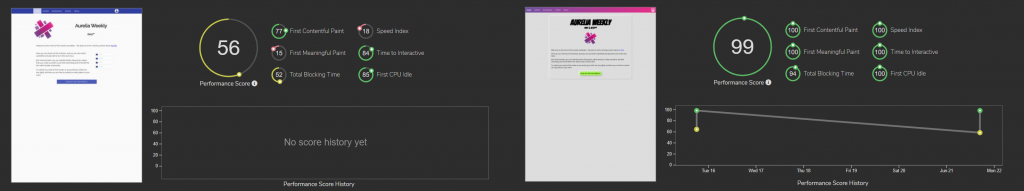

Speed difference comparing v1 to v2

As a fun comparison, below is the fastorslow score difference between v1 and v2 😄 That’s a substantial increase in performance right there! Total blocking time is the only offender, and that should drop in later Aurelia 2 versions, as this is only the pre-beta release of au2 🥳

The one drawback

The one problem with Azure CDN is that it doesn’t support naked domains. Hence only www.aureliaweekly.com works right now and aureliaweekly.com reports a privacy issue. There’s a trick-based solution to this via CloudFlare, and it’s actually free. I will see if I can get around to setting that up during vacation. However, it feels totally like yak-shaving and shouldn’t be needed. There’s also a user voice item for this, but it has had no response since 2018?! Rumor has it someone is actually working on this over in Redmond, but if or when we’ll see a good solution to this… ¯\_(ツ)_/¯

💰Cost

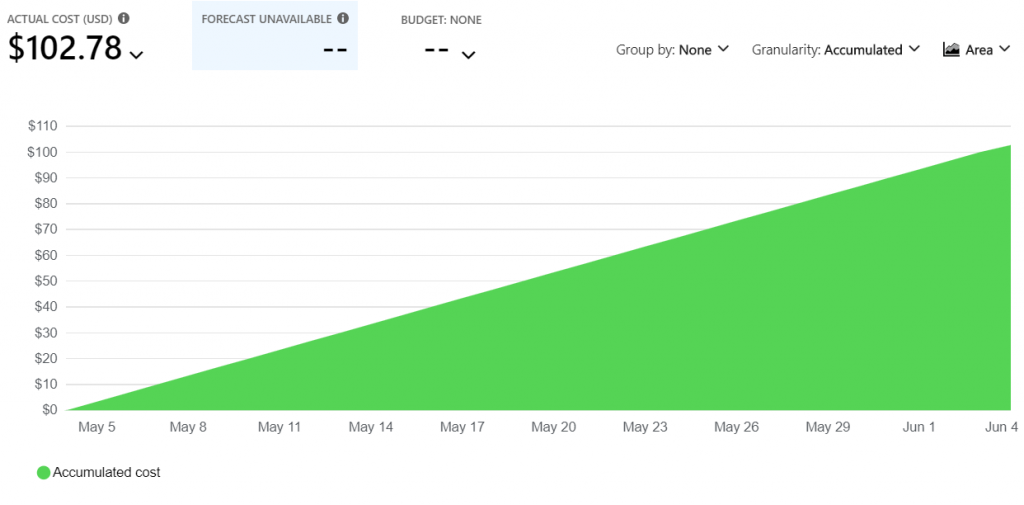

One of the major drivers of this project was to cut operational cost. So, did I manage to cut cost?

The cost was significantly reduced when the largest culprits from the previous architecture were replaced. When I started the project I suspected I’d cut cost to a tenth or even more if I was lucky. I must admit I hadn’t done any napkin math then, it was just a (bad) guesstimate.

The cost for v1 of “The Weekly” for the May – June billing period can be seen below. The cost is mostly due to Cosmos DB (~$60) and the App Service (~$38).

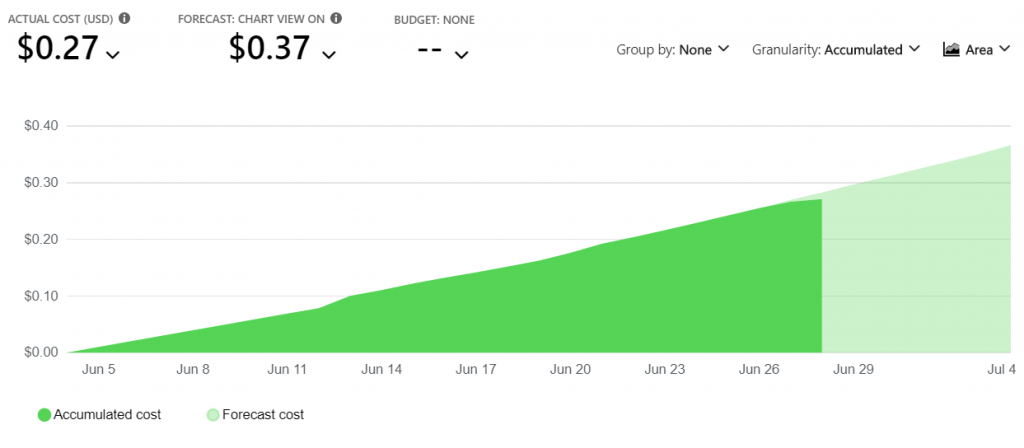

I’ve yet to run a full month’s worth of “real” usage for v2, but the below graph shows the June – July billing period, with estimated cost for the last few days. Even if the estimate of the last few days in June now doubles, I’d still say it’s an epic win 😆

The operational cost of v2 is thus less than a 200th of that of v1! If we want to be real picky and use the numbers from the graphs above, a 277th of v1 😁
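To get a feel for what a 277th means in actual money, here is a bit of napkin math in TypeScript. The graphs are not reproduced as numbers in the text, so this sketch only uses the two v1 line items quoted above and the 277 ratio. The implied v2 figure is therefore an estimate and a lower bound, not a number read from the bill.

```typescript
// v1 line items quoted in the text (USD per billing period, approximate).
const cosmosDbCost = 60;
const appServiceCost = 38;

// Lower bound for v1, since smaller line items are not listed in the text.
const v1Cost = cosmosDbCost + appServiceCost; // 98

// Ratio quoted in the text: v2 costs about a 277th of v1.
const ratio = 277;

// Implied v2 cost for the same period, rounded to cents.
const impliedV2Cost = Math.round((v1Cost / ratio) * 100) / 100;

console.log(impliedV2Cost); // 0.35
```

So under these assumptions, v2 lands somewhere around 35 cents per billing period.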

To be fully transparent, the cost for the Active Directory isn’t included in the graph for v2 above, but that cost is about two pennies a month so that won’t really change anything.

🍃Environmental aspect

Measuring environmental impact isn’t easy and there are no hard figures to get from Azure afaik. But there’s little doubt v2 outshines v1 when it comes to sustainable software engineering.

Energy Proportionality

A measure of power consumed per work unit. Less is better 🙂

- Power consumed by v2 Azure Storage < power consumed by v1 Cosmos DB.

- Power consumed by v2 CDN < power consumed by Web App Shared Instance.

Networking

Reducing the amount of data and distance data must travel to reach consumer leads to a smaller carbon footprint.

Using Aurelia 2 helped reduce the payload significantly, as did skipping some third party dependencies and writing that code myself. The payload of v1 was ~625kb, while the payload of v2 clocks in super low at ~141kb! 🪁
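For the curious, the payload numbers work out as follows. This is just the arithmetic on the two approximate sizes quoted above; `percentReduction` is a helper written for this example.

```typescript
// Payload sizes quoted in the text, in kilobytes (approximate).
const v1PayloadKb = 625;
const v2PayloadKb = 141;

// Percentage reduction going from `before` to `after`, rounded to one decimal.
function percentReduction(before: number, after: number): number {
  return Math.round(((before - after) / before) * 1000) / 10;
}

console.log(percentReduction(v1PayloadKb, v2PayloadKb)); // 77.4
```

That is roughly a 77% smaller payload, or put differently, v1 shipped about 4.4 times as many bytes as v2.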

With v2 using CDNs, there are more endpoints hosting the application compared to the one Web App. However, the nodes have a higher utilization grade. An added benefit is that the CDN compression is really good. Another benefit is the reduced distance between consumer and data!

For a more in-depth take on Green Software Engineering, check out 🌱 Principles.Green

🔑Key takeaways

- Aurelia 2 is super fun to code in! I look forward to the beta-release that will be out soon™ and hope you will enjoy it as much as I do 🤯

- Side projects take a long time if you pivot technologies multiple times 😩

- Authentication is still not easy for a lot of MSFT technologies. It totally should be by now 🔐

- Azure Functions is a lot of fun ⚡😍

- Azure Storage Tables can be a great part of your data layer, given the requirements of your data 💾

- Cloud costing is not easy. Even if you act according to best practice and recommendations, there are most certainly other ways to go about things that will be more cost effective (and probably almost as performant) 💸

Happy Coding! 😊

Thanks for the write-up. This was interesting to read. I especially like the performance gains in Au2.

YES! Au2 looks so promising, can’t wait for the beta release 😊

Tnx. Nice article to read.

🙇‍♂️

Thanks for the article and the note about the function token extension. I’ll try that. My hobby project is still aurelia v1, but has pretty much the same setup.

👍 However awesome the extension is, I just wish it wasn’t needed still… ¯\_(ツ)_/¯
